Talk: Wikipedia from the World: Grounded Articles from Any Source, 11/24

4-5:15 pm EST Monday, Nov. 24, 2025 in ITE 229 & Online

Wikipedia from the World: Grounded Articles from Any Source

Alexander Martin, JHU

4-5:15pm EST Monday, Nov. 24 in ITE229 and online

Whether tracking emerging events, analyzing economic trends, or understanding public discourse, valuable information is scattered across modalities, from professionally produced news content and curated Wikipedia articles to firsthand footage of disasters livestreamed on social media. Building systems that can effectively retrieve, reason over, and synthesize these heterogeneous information sources is essential for knowledge-intensive applications.

This talk will focus on advancing both sides of the information-seeking pipeline: retrieving relevant multimodal evidence at scale, and synthesizing that evidence into coherent, Wikipedia-style explanations grounded in verifiable evidence. For retrieval, we will focus on recent progress in large-scale multimodal retrieval, including new datasets, efficient and scalable first-stage retrievers, and reasoning-based reranking. For Wikipedia-style article generation, we will cover benchmarking and evaluating multimodal article generation and a method for enabling the use of VLMs for high-level reasoning. Together, these components outline a path toward unified systems capable of transforming large collections of multimodal evidence into verifiable, human-readable articles.
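As a rough illustration of the retrieve-then-rerank pattern mentioned above, here is a minimal text-only sketch. It is not the speaker's system: the token-overlap first stage, the cosine reranker, the function names, and the toy documents are all assumptions made for this example.

```python
import math
from collections import Counter

def first_stage(query, docs, k=3):
    """Cheap, recall-oriented pass: rank documents by number of shared tokens."""
    q = set(query.lower().split())
    scored = [(len(q & set(text.lower().split())), doc_id)
              for doc_id, text in docs.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for score, doc_id in scored[:k] if score > 0]

def rerank(query, candidates, docs):
    """Costlier pass over the candidates only: cosine similarity of term counts."""
    def vec(text):
        return Counter(text.lower().split())

    def cosine(a, b):
        dot = sum(a[t] * b[t] for t in a)
        norm = (math.sqrt(sum(v * v for v in a.values()))
                * math.sqrt(sum(v * v for v in b.values())))
        return dot / norm if norm else 0.0

    qv = vec(query)
    return sorted(candidates, key=lambda d: (-cosine(qv, vec(docs[d])), d))

# Hypothetical toy collection, invented for illustration.
docs = {
    "a": "flood footage from the river town",
    "b": "flood",
    "c": "economic trends report",
}
candidates = first_stage("flood footage", docs)
print(rerank("flood footage", candidates, docs))
```

The first stage only has to be fast and keep plausible candidates; the second stage can afford a more expensive scorer because it sees just a handful of items.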

Alexander Martin is a PhD candidate at Johns Hopkins University’s Center for Language and Speech Processing (CLSP) and Human Language Technology Center of Excellence (HLTCOE). He is advised by Dr. Benjamin Van Durme. Alex’s research focuses on end-to-end multimodal information retrieval and reasoning. His work aims to produce Wikipedia-style articles, grounded in retrieved documents and videos, in response to information-seeking queries. His research has been published in CVPR, ACL, NAACL, and EMNLP. Alex is a recipient of the NSF’s Graduate Research Fellowship.

https://www.tejasgokhale.com/seminar.html

genai

information-retrieval

llm

multimodal

nlp

talk

vlm

wikipedia

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

UMBC

multimodal information retrieval

Mon, 17 Nov 2025 08:14:06 -0500

Talk: Inductive Analysis of Texts with Embeddings, 11/5

12-1:30pm Wednesday, November 5, 2025, Commons 329

Inductive Analysis of Texts with Embeddings

Word or text embeddings are a central component in modern language models, including those powering generative AI. Embeddings represent word meanings as positions in space, where words that are closer together are used in similar contexts or evoke similar concepts -- even if those words never actually co-occur. We navigate the meaning space created by embeddings directly using basic arithmetic, and in doing so, explore how meaning changes over time or how meaning differs between collections of texts.
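To make the idea of doing basic arithmetic in a meaning space concrete, here is a minimal sketch. The three-dimensional vectors are invented for illustration (real embeddings are learned from text and have many more dimensions), and the function names are assumptions, not part of the workshop material.

```python
import math

# Toy 3-d vectors invented for illustration; real embeddings are learned from text.
EMBEDDINGS = {
    "man":   [1.0, 0.0, 0.0],
    "woman": [1.0, 1.0, 0.0],
    "king":  [1.0, 0.0, 1.0],
    "queen": [1.0, 1.0, 1.0],
    "apple": [0.0, 0.2, 0.0],
}

def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def analogy(a, b, c, embeddings=EMBEDDINGS):
    """Return the word closest to vec(b) - vec(a) + vec(c), excluding the inputs."""
    target = [vb - va + vc
              for va, vb, vc in zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = [w for w in embeddings if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, embeddings[w]))

print(analogy("man", "king", "woman"))  # prints: queen
```

The same cosine comparison can be run on embeddings trained on two different corpora or time periods to see how a word's nearest neighbors shift.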

Dustin Stoltz is an Assistant Professor of Sociology and Cognitive Science at Lehigh University. He studies a variety of topics in cultural and economic sociology and specializes in computational methods. Five copies of his recently published book, Mapping Texts: Computational Text Analysis for the Social Sciences (coauthored with Marshall Taylor), will be raffled off to workshop registrants.

Lunch will be provided for registered attendees.

Hosted by the Center for Social Science Scholarship and cosponsored by the Departments of English; Sociology, Anthropology, & Public Health; Modern Languages, Linguistics, & Intercultural Communication; the Division of Information Technology; the Center for Scalable Data and Computational Science; and CGC-SCIPE.

https://my3.my.umbc.edu/groups/csss/events/145563

ai

analysis

embeddings

nlp

text

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

UMBC AI

Image of Dustin Stoltz, an Assistant Professor of Sociology and Cognitive Science at Lehigh University.

Thu, 16 Oct 2025 12:31:41 -0400

Sat, 18 Oct 2025 10:03:00 -0400

Talk: Towards Multilingual Evaluations of Knowledge for LLMs

2-3pm EDT Tue., Oct. 14, 2025, ITE 325b, UMBC

Language Technology Seminar Series (LaTeSS)

Towards Multilingual Evaluations of Knowledge for Large Language Models

Bryan Li, University of Pennsylvania

2-3pm Tue., Oct. 14, 2025, ITE 325b, UMBC

Contemporary language models (LMs) support dozens of languages, promising to broaden information access for global users. However, existing multilingual evaluations largely study factual recall tasks, failing to address knowledge-intensive tasks shaped by the uneven coverage and different perspectives of knowledge across languages. This dissertation investigates how LMs handle such tasks by examining their internal parametric knowledge and their use of externally-provided contextual knowledge. In the first part, I introduce benchmarks for complex reasoning and territorial disputes, and find that LM responses on both tasks exhibit a lack of cross-lingual robustness, outputting inconsistent answers to underlying queries written in different languages. I then show that lightweight methods of leveraging program code and persona-based prompting can mitigate these issues.

In the second part, I explore the retrieval-augmented generation (RAG) setting, which combines an LM's internal parametric knowledge with contextual knowledge from external knowledge bases (KBs). Focusing on the territorial disputes task, I show that while RAG over single-language or single-source KBs has mixed effects on robustness, retrieving over multilingual and multi-source KBs (Wikipedia, as well as a large-scale dataset of state media articles I collected) substantially boosts robustness. Together, these findings highlight the need for LMs that can navigate, and assist users in navigating, the real-world distribution of knowledge across languages and sources. This is a practice dissertation talk, and your feedback would be greatly appreciated!

Bryan Li is a final-year PhD student at the University of Pennsylvania, advised by Prof. Chris Callison-Burch. His research focuses on multilingual evaluations of LLMs, spanning the fields of natural language processing and computational social science. His work has appeared in conferences such as ACL, COLM, and ICLR. Outside of research, you can find him in a trendy cafe, on a riverside running trail, or at home listening to a good podcast.

https://laramartin.net/LaTeSS

language-model

llm

multilingual

nlp

rag

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

UMBC Language, Aid, and Representation AI Lab

Bryan Li observing a crash between a vehicle and a balloon

Wed, 08 Oct 2025 15:07:02 -0400

UMBC PhD student Ommo Clark wins best paper award with Karuna Joshi

Detecting misinformation with LLMs and Knowledge Graphs

A research paper by UMBC Information Systems PhD student Ommo Clark co-authored with her advisor Professor Karuna Joshi received the Best Student Paper award at the IEEE International Conference on Digital Health held earlier this month in Helsinki as part of the IEEE Services Congress.

The paper addressed the problem of identifying health misinformation on social media platforms, which poses a threat to public health by contributing to vaccine hesitancy, delayed medical interventions, and the adoption of untested or harmful treatments.

Clark and Joshi evaluated their hybrid approach, which combines LLM and knowledge-graph technologies, on a dataset of Reddit posts discussing chronic health conditions and showed its benefits over models that use only text or only knowledge graphs. Their paper, Real-Time Detection of Online Health Misinformation using an Integrated KnowledgeGraph-LLM Approach, is available here.

https://ebiquity.umbc.edu/paper/html/id/1193/Real-Time-Detection-of-Online-Health-Misinformation-using-an-Integrated-Knowledgegraph-LLM-Approach

ai

knowledge-graph

misinformation

nlp

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

UMBC AI

Paper by Ommo Clark and Karuna Joshi receives award

Wed, 30 Jul 2025 18:20:20 -0400

Tutorial on NeuroSymbolic AI applied to NLP

Material from the AAAI 2025 tutorial

Large language models are transforming natural language processing tasks across many domains. Despite their capabilities, their real-world adoption is often limited by issues such as a lack of transparency, inadequate understanding of domain protocols, and subpar precision.

Manas Gaur, Ed Raff, and Ali Mohammadi were part of the team that organized and presented a half-day tutorial at the 2025 AAAI Conference last month covering the concept of Neurosymbolic AI and how it can be applied to LLMs to help solve key challenges in NLP tasks like explainability, grounding, and instructability.

You can see their slides and other material here.

UMBC Center for AI

https://nesy-egi.github.io/

ai

llm

nlp

tutorial

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

UMBC AI

Fri, 21 Mar 2025 10:18:25 -0400

Fri, 21 Mar 2025 10:41:51 -0400

Talk: Using Participatory Design and AI to Create Agency-increasing Augmentative and Alternative Communication Systems

3-4pm ET Mon., Nov. 18, 2024 in ITE 406 & online

Talk: Using Participatory Design and AI to Create Agency-increasing Augmentative and Alternative Communication Systems

Dr. Stephanie Valencia, Univ. of Maryland

3-4pm ET Monday, November 18, 2024

ITE 406, UMBC and online

Agency and communication are integral to personal development, enabling us to pursue and express our goals. However, agency in communication is not fixed: many individuals who use speech-generating devices to communicate encounter social constraints and technical limitations that can restrict what they can say, how they can say it, and when they can contribute to a discussion. In this talk, I will delve into how an agency-centered design approach can foster more accessible communication experiences and help us uncover opportunities for design. Drawing from empirical research and collaborative co-design with people with disabilities, I will highlight how various technological tools, such as automated transcription, physical interaction artifacts, and AI-driven language generation, can impact conversational agency. Additionally, I will share practical design strategies and discuss existing challenges for co-designing communication technologies that enhance user agency and participation.

Dr. Valencia is dedicated to promoting equitable access to assistive technologies (AT), advocating for open-source hardware, and championing the inclusion of underrepresented groups in technology design and development. Dr. Valencia’s research endeavors are centered on elevating user agency, accessibility, and enjoyment. Employing participatory design methodologies, she has explored the integration of diverse design elements such as artificial intelligence and embodied expressive objects to empower augmentative and alternative communication users. Dr. Valencia works not only on conceptualizing these innovations but also on building and deploying them to make a real-world impact. Rigorous empirical studies are an integral part of her work, ensuring that the efficacy and significance of design contributions are thoroughly assessed. She earned her Ph.D. at the Human-Computer Interaction Institute at Carnegie Mellon University.

UMBC Center for AI

https://my3.my.umbc.edu/groups/langtech/events/136186

agency

ai

nlp

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

LARA Lab and Interactive Systems Research Center

Wed, 13 Nov 2024 21:48:26 -0500

Wed, 13 Nov 2024 21:55:47 -0500

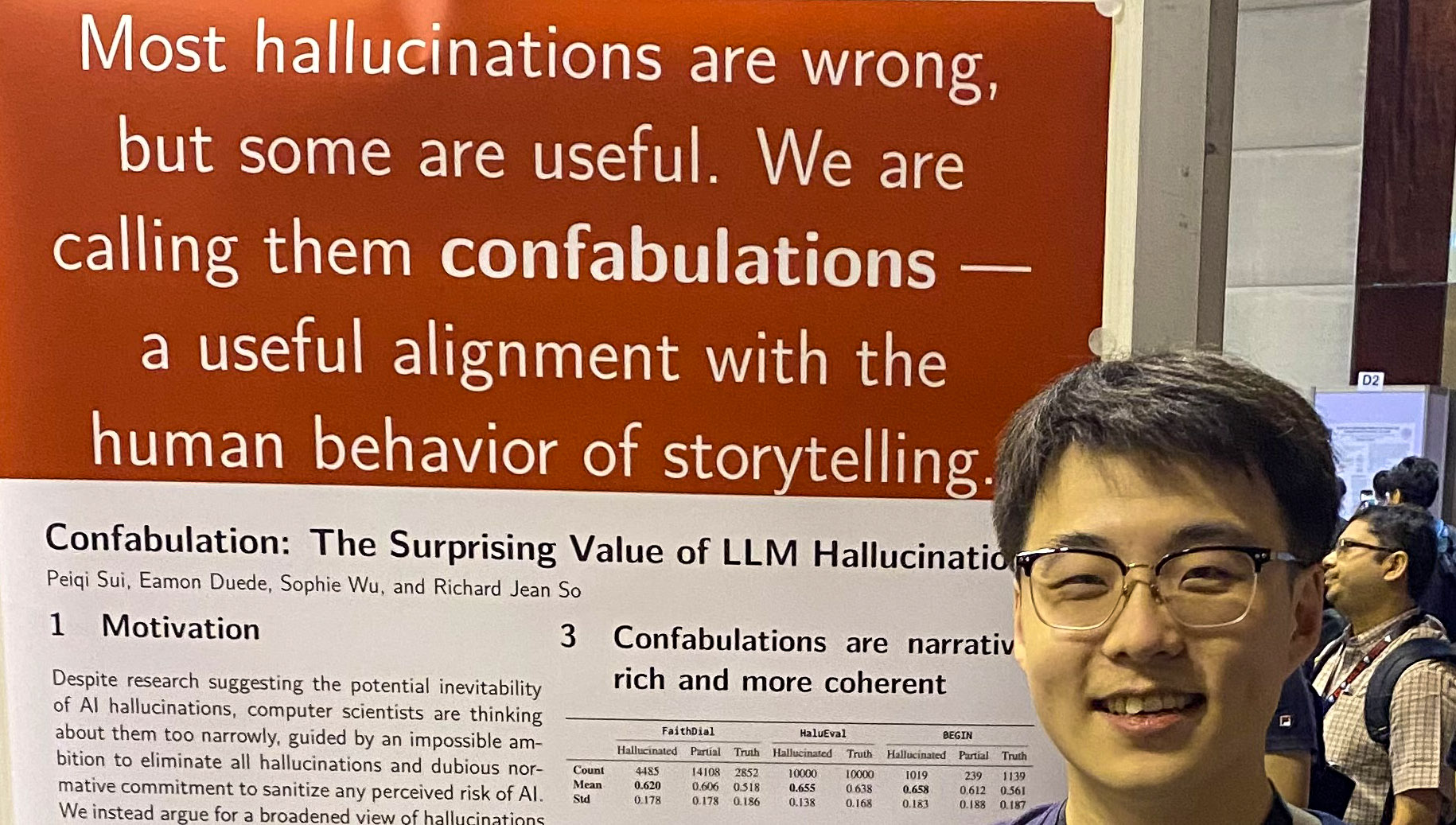

Talk: Confabulation: What Could LLM Hallucinations Do For Storytelling? 11/14

11:30-12:50 Thur. Nov. 14, 2024, Sondheim Hall 110 & online

Peiqi "Patrick" Sui will talk on Confabulation: What Could LLM Hallucinations Do For Storytelling?, 11:30am-12:50pm on Thursday, Nov. 14, 2024 in Sondheim Hall 110 at UMBC and online.

Are hallucinations always bad? Most NLP research takes the normative stance that they are, but this overlooks the cognitive and communicative affordances of particularly story-like hallucinations (which we'll call confabulations). Consider two general categories of LLM applications: using them as tools, or interacting with them as viable cultural agents. The two have very different training objectives in terms of the tradeoff between factuality and alignment with the human behavior of storytelling, and when it comes to ensuring the latter, LLMs that could effectively confabulate would be especially useful. For instance, confabulations could enable LLMs to perform speculative narration and address omissions in history resulting from social injustice, in the hope of enacting what literary theorist Saidiya Hartman calls "critical fabulation" at scale, and giving interactive storytelling a wider social impact.

Patrick Sui is a second-year PhD student in English at McGill University, advised by Richard Jean So. He mainly works in digital humanities and cultural analytics, and spends most of his time thinking about how literary studies could uniquely contribute to AI research about language. His current research topics include benchmarks for close reading & interpretive reasoning, modeling close reading behaviors with information theory, knowledge-grounded style transfer for co-creative systems, AI literacy & writing pedagogy, and all kinds of computational literary theory.

UMBC Center for AI

https://laramartin.net/LaTeSS

ai

lara-lab

llm

nlp

storytelling

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

Language, Aid, and Representation AI Lab

Mon, 11 Nov 2024 18:08:30 -0500

Mon, 11 Nov 2024 19:06:30 -0500

Talk today on AI for Event-Centric Video Retrieval, 1:30pm in ITE 325b

If you are interested in a challenging AI problem involving integrated spoken language and video understanding, Reno Kriz from JHU will discuss the results of a large summer project focused on finding videos about specific current events. His presentation will be at 1:30 p.m. today (Tuesday, 10/8) in ITE 325b and also online. Register and get more information here.

https://my3.my.umbc.edu/groups/langtech/events/134555

ai

audio

nlp

text

video

vision

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

Language Technology Seminar Series

Tue, 08 Oct 2024 10:10:00 -0400

Talk: Takeaways from the Workshop on Event-Centric Video Retrieval, Oct 8

Reno Kriz, JHU HLTCOE, 1:30-2:30pm EDT, Tue. Oct. 8

Takeaways from the SCALE 2024 Workshop on Event-Centric Video Retrieval

Reno Kriz, JHU HLTCOE

1:30-2:30 pm EDT Tuesday, October 8, 2024

ITE 325b, UMBC and online

Information dissemination for current events has traditionally consisted of professionally collected and produced materials, leading to large collections of well-written news articles and high-quality videos. As a result, most prior work in event analysis and retrieval has focused on leveraging this traditional news content, particularly in English. However, much of the event-centric content today is generated by non-professionals, such as on-the-scene witnesses to events who hastily capture videos and upload them to the internet without further editing; these are challenging to find due to quality variance, as well as a lack of text or speech overlays providing clear descriptions of what is occurring. To address this gap, SCALE 2024, a 10-week research workshop hosted at the Human Language Technology Center of Excellence (HLTCOE), focused on multilingual event-centric video retrieval, or the task of finding videos about specific current events. Around 50 researchers and students participated in this workshop and were split up into five sub-teams. The Infrastructure team focused on developing MultiVENT 2.0, a challenging new video retrieval dataset consisting of 20x more videos than prior work and targeted queries about specific world events across six languages. The other teams worked on improving models from specific modalities, specifically Vision, Optical Character Recognition (OCR), Audio, and Text. Overall, we came away with three primary findings: extracting specific text from a video allows us to take better advantage of powerful methods from the text information retrieval community; LLM summarization of initial text outputs from videos is helpful, especially for noisy text coming from OCR; and no one modality is sufficient, with fusing outputs from all modalities resulting in significantly higher performance.
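The abstract does not say how the per-modality outputs were fused. As one common option, the sketch below uses reciprocal rank fusion (RRF), a swapped-in technique chosen for illustration; the modality names mirror the teams above, while the video IDs, rankings, and the constant k=60 are assumptions made for this example.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists: each list adds 1 / (k + rank) to an item's score."""
    scores = {}
    for ranked in rankings.values():
        for rank, video_id in enumerate(ranked, start=1):
            scores[video_id] = scores.get(video_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for determinism.
    return sorted(scores, key=lambda v: (-scores[v], v))

# Hypothetical per-modality rankings for one query (best match first).
rankings = {
    "vision": ["v1", "v2", "v3"],
    "ocr":    ["v2", "v1"],
    "audio":  ["v2", "v3"],
    "text":   ["v3", "v2"],
}
print(reciprocal_rank_fusion(rankings))  # prints: ['v2', 'v3', 'v1']
```

A video that several modalities rank moderately well can outrank one that a single modality ranks first, which is the intuition behind the finding that no one modality is sufficient.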

Reno Kriz is a research scientist at the Johns Hopkins University Human Language Technology Center of Excellence (HLTCOE). His primary research interests involve leveraging large pre-trained models for a variety of natural language understanding tasks, including those crossing into other modalities, e.g., vision and speech understanding. These multimodal interests have recently involved the 2024 Summer Camp for Language Exploration (SCALE) on event-centric video retrieval and understanding. He received his PhD from the University of Pennsylvania, where he worked with Chris Callison-Burch and Marianna Apidianaki on text simplification and natural language generation. Prior to that, he received BA degrees in Computer Science, Mathematics, and Economics from Vassar College.

Part of the UMBC Language Technology Seminar Series

UMBC Center for AI

https://my3.my.umbc.edu/groups/langtech/events/134555

ai

events

language

nlp

video

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

Language Technology Seminar Series

Tue, 24 Sep 2024 18:37:45 -0400

Tue, 24 Sep 2024 19:33:24 -0400

talk: AI Resilient Interfaces for Code Generation and Efficient Reading, Dr. Jonathan Kummerfeld

3-4pm EDT, Tue. 10 Sept. 2024, ITE 325b at UMBC & online

The UMBC Language Technology Seminar Series (LaTeSS – pronounced lattice) showcases talks from experts researching various language technologies, including but not limited to natural language processing, computational linguistics, speech processing, and digital humanities. UMBC people can join the group here.

AI Resilient Interfaces for Code Generation and Efficient Reading

Dr. Jonathan K. Kummerfeld, University of Sydney

3-4 pm EDT Tuesday, 10 Sept. 2024, ITE 325b at UMBC and online via WebEx

AI is being integrated into virtually every computer system we use, but often in ways that mean we cannot see the decisions AI makes for us. If we don't see a decision, we cannot notice whether we agree with it, and what we don't notice, we cannot change. For example, using an AI summarization system means trusting that it has captured all the aspects of a document that are relevant to you. If the task is high stakes, then the only way to check is to read the original document, but that significantly decreases the value of the summary. In this talk, I will present the concept of AI resilient interfaces: systems that use AI while giving users the information they need to notice and change its decisions. I will walk through two examples of novel systems that are more AI resilient than the typical solution to the problem for (1) SQL generation and (2) faster reading. I will conclude with thoughts on the potential and pitfalls of designing with AI resilience in mind.

Jonathan K. Kummerfeld is a Senior Lecturer (i.e., research tenure-track Assistant Professor) in the School of Computer Science at the University of Sydney. He is currently also a DECRA fellow, and collaborates with a range of academics across the world, including on DARPA-funded projects on AI agents that communicate. He completed his Ph.D. at the University of California, Berkeley, and was previously a postdoc at the University of Michigan, and a visiting scholar at Harvard. Jonathan’s research focuses on interactions between people and NLP systems, developing more effective algorithms, workflows, and systems for collaboration. He has been on the program committee for over 50 conferences and workshops. He currently serves as Co-CTO of ACL Rolling Review (a peer review system) and is a standing reviewer for the Computational Linguistics journal and the Transactions of the Association for Computational Linguistics journal.

UMBC Center for AI

https://laramartin.net/LaTeSS

ai

llm

nlp

UMBC AI

https://beta.my.umbc.edu/groups/umbc-ai

UMBC Language Technology Seminar Series

Sat, 07 Sep 2024 12:03:44 -0400