Talk: Visual Concept Learning Beyond Appearances, 3:30pm 2/8

Modernizing some classic ideas

PPR Distinguished Speaker

Visual Concept Learning Beyond Appearances: Modernizing a Couple of Classic Ideas

Arizona State University

3:30-4:45 pm ET, Thur. Feb. 8, 2024

The goal of

Computer Vision, as coined by

Marr, is to develop algorithms to answer "What are", "Where at", "When from" visual appearance. The speaker, among others, recognizes the importance of studying underlying entities and relations beyond visual appearance, following an

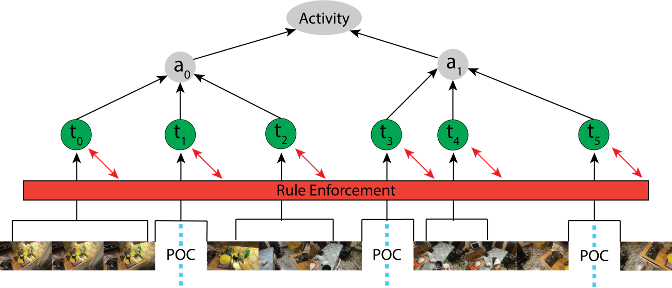

Active Perception paradigm. This talk will present the speaker's efforts over the last decade, ranging from 1) reasoning beyond appearance for vision and language tasks (

VQA,

captioning,

T2I, etc.), and addressing their evaluation misalignment, through 2) reasoning about implicit properties, to 3) their roles in a Robotic visual concept learning framework. The talk will also feature the Active Perception Group (APG)'s projects addressing emerging challenges of the nation in automated mobility and intelligent transportation domains, at the ASU School of Computing and Augmented Intelligence (SCAI).

Yezhou (YZ) Yang is an Associate Professor and a Fulton Entrepreneurial Professor in the School of Computing and Augmented Intelligence (SCAI) at Arizona State University. He founded and directs the ASU Active Perception Group, and currently serves as the topic lead (situation awareness) at the Institute of Automated Mobility, Arizona Commerce Authority. He is also a thrust lead (AVAI) at Advanced Communications Technologies (ACT, a Science and Technology Center under the New Economy Initiative, Arizona). His work includes exploring visual primitives and representation learning in visual (and language) understanding, grounding them by natural language and high-level reasoning over the primitives for intelligent systems, secure/robust AI, and V&L model evaluation alignment. Yang is a recipient of the Qualcomm Innovation Fellowship 2011, the NSF CAREER award 2018, and the Amazon AWS Machine Learning Research Award 2019. He received his Ph.D. from the University of Maryland at College Park, and B.E. from Zhejiang University, China. He is a co-founder of ARGOS Vision Inc, an ASU spin-off company.

The Advances in Perception, Prediction, and Reasoning (PPR) talks are organized and hosted by UMBC Professor Tejas Gokhale.